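import os

# Set the log level before importing TensorFlow so that C++ INFO and WARNING messages are filtered out.
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data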

logs_path = "five_layers_log"

batch_size = 100

learning_rate = 0.003

training_epochs = 10

mnist = input_data.read_data_sets("../data", one_hot=True)

x = tf.placeholder(tf.float32, [None, 784])

y = tf.placeholder(tf.float32, [None, 10])

first_layer = 200

second_layer = 100

third_layer = 60

fourth_layer = 30

fifth_layer = 10

W1 = tf.Variable(tf.truncated_normal([784, first_layer], stddev=0.1))

B1 = tf.Variable(tf.zeros([first_layer]))

W2 = tf.Variable(tf.truncated_normal([first_layer, second_layer], stddev=0.1))

B2 = tf.Variable(tf.zeros([second_layer]))

W3 = tf.Variable(tf.truncated_normal([second_layer, third_layer], stddev=0.1))

B3 = tf.Variable(tf.zeros([third_layer]))

W4 = tf.Variable(tf.truncated_normal([third_layer, fourth_layer], stddev=0.1))

B4 = tf.Variable(tf.zeros([fourth_layer]))

W5 = tf.Variable(tf.truncated_normal([fourth_layer, fifth_layer], stddev=0.1))

B5 = tf.Variable(tf.zeros([fifth_layer]))

XX = tf.reshape(x, [-1, 784])

Y1 = tf.nn.sigmoid(tf.matmul(XX, W1) + B1)

Y2 = tf.nn.sigmoid(tf.matmul(Y1, W2) + B2)

Y3 = tf.nn.sigmoid(tf.matmul(Y2, W3) + B3)

Y4 = tf.nn.sigmoid(tf.matmul(Y3, W4) + B4)

Ylogits = tf.matmul(Y4, W5) + B5

Y = tf.nn.softmax(Ylogits)

cross_entropy = tf.nn.softmax_cross_entropy_with_logits(logits=Ylogits, labels=y)

cross_entropy = tf.reduce_mean(cross_entropy)*100

correct_prediction = tf.equal(tf.argmax(Y, 1), tf.argmax(y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

train_step = tf.train.AdamOptimizer(learning_rate).minimize(cross_entropy)

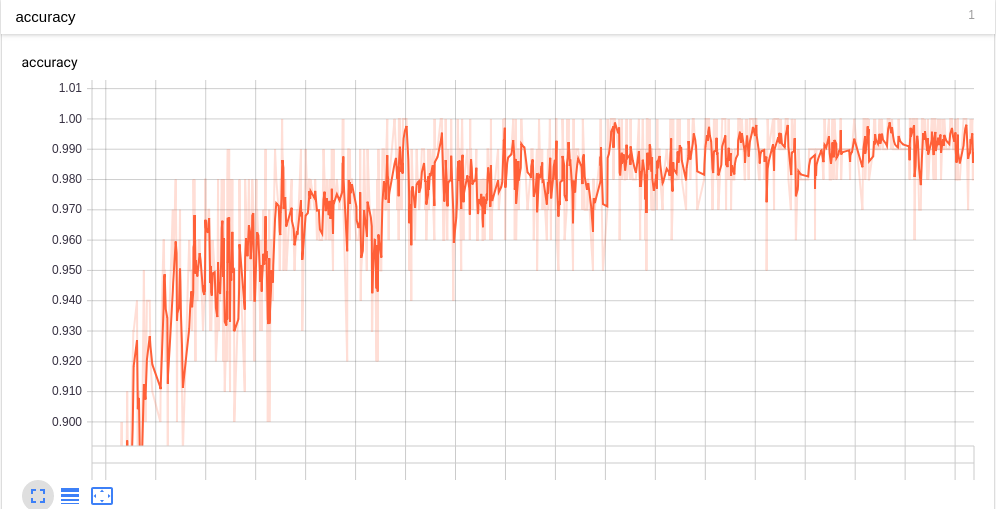

tf.summary.scalar("cost", cross_entropy)

tf.summary.scalar("accuracy", accuracy)

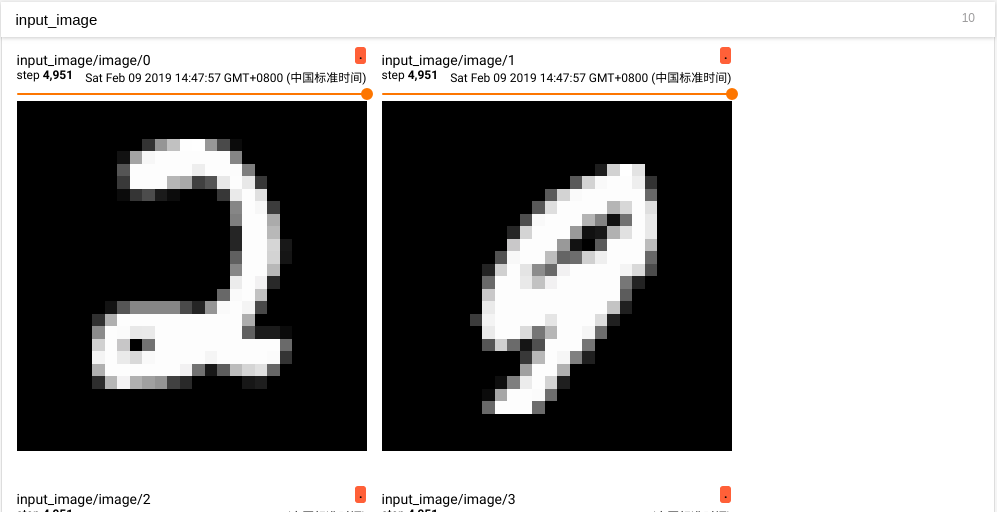

tf.summary.image("input_image", tf.reshape(x, [-1, 28, 28, 1]), 10)

summary_op = tf.summary.merge_all()

init = tf.global_variables_initializer()

with tf.Session() as sess:

sess.run(tf.global_variables_initializer())

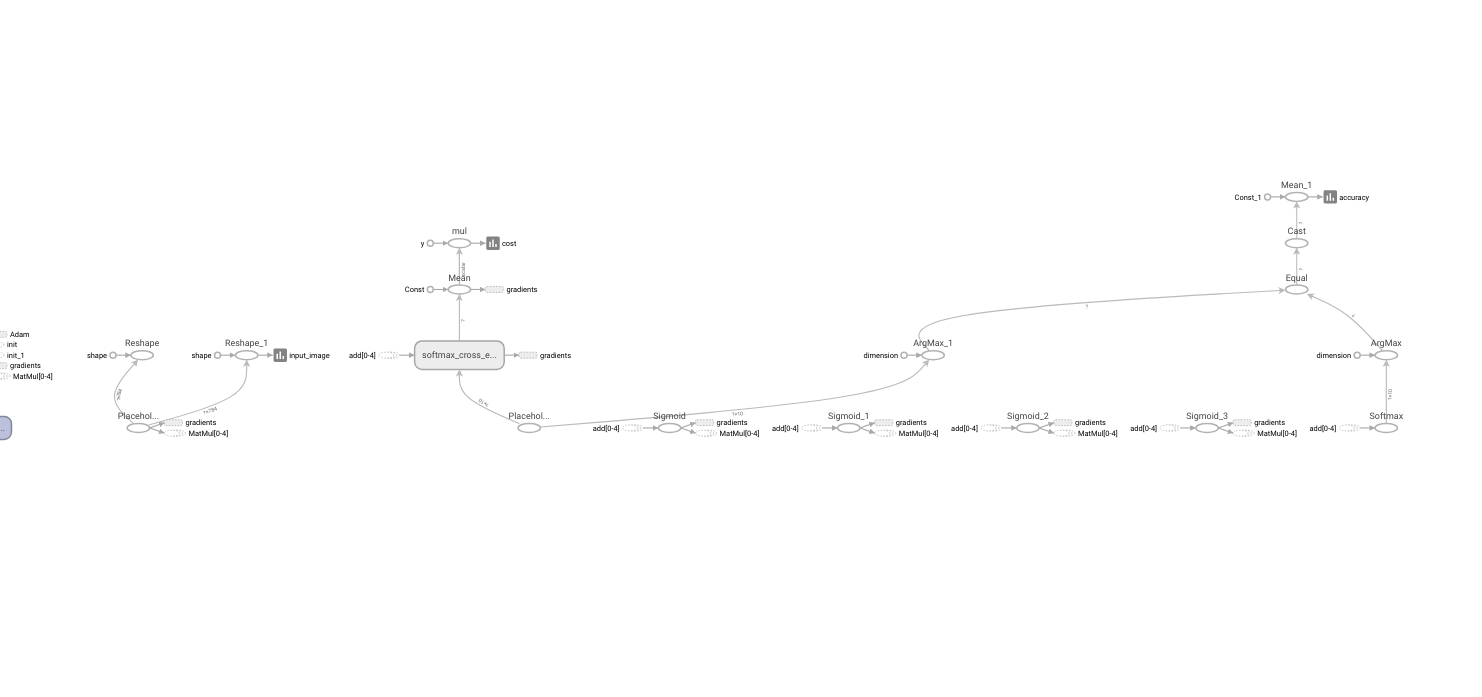

writer = tf.summary.FileWriter(logs_path, graph=tf.get_default_graph())

for epoch in range(training_epochs):

batch_count = int(mnist.train.num_examples/batch_size)

for i in range(batch_count):

batch_x, batch_y = mnist.train.next_batch(batch_size)

_, summary = sess.run([train_step, summary_op], feed_dict={x: batch_x, y: batch_y})

writer.add_summary(summary, epoch*batch_count+1)

print("Epoch", epoch)

print("Accuracy", accuracy.eval(feed_dict={x: mnist.test.images, y: mnist.test.labels}))

print("Done")

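TensorBoard plots each summary at the step value passed to `writer.add_summary`, so each training batch needs its own step for the curves to show one point per batch. The following is a minimal sketch in plain Python (no TensorFlow needed) of one way to derive such a step from the epoch and the batch index. The helper name `global_step` is hypothetical, and the figure of 55,000 training examples is an assumption about the MNIST training split used above.

```python
def global_step(epoch, batch_index, batches_per_epoch):
    """Return a unique, increasing step for an (epoch, batch) pair."""
    return epoch * batches_per_epoch + batch_index


# Assumed sizes: 55,000 training examples and a batch size of 100.
batches_per_epoch = 55000 // 100

# Steps for the first two epochs, in training order.
steps = [
    global_step(epoch, i, batches_per_epoch)
    for epoch in range(2)
    for i in range(batches_per_epoch)
]

# Every batch gets a distinct step, and the sequence has no gaps.
print(steps[:3], steps[-1])
```

A step computed as `epoch * batches_per_epoch + 1` would, by contrast, assign the same step to every batch within an epoch, so the points for that epoch would all be written at a single position on the x-axis.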